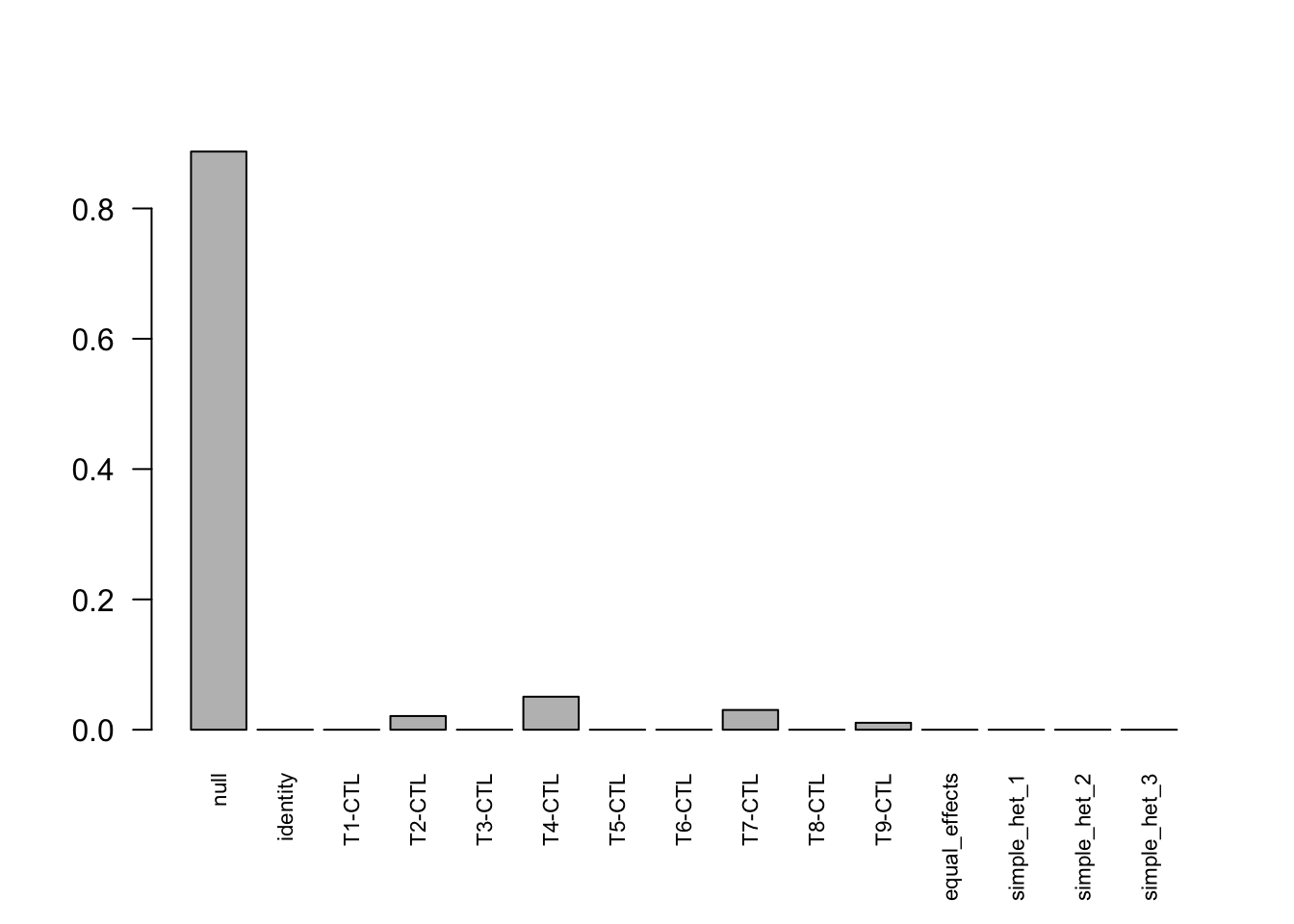

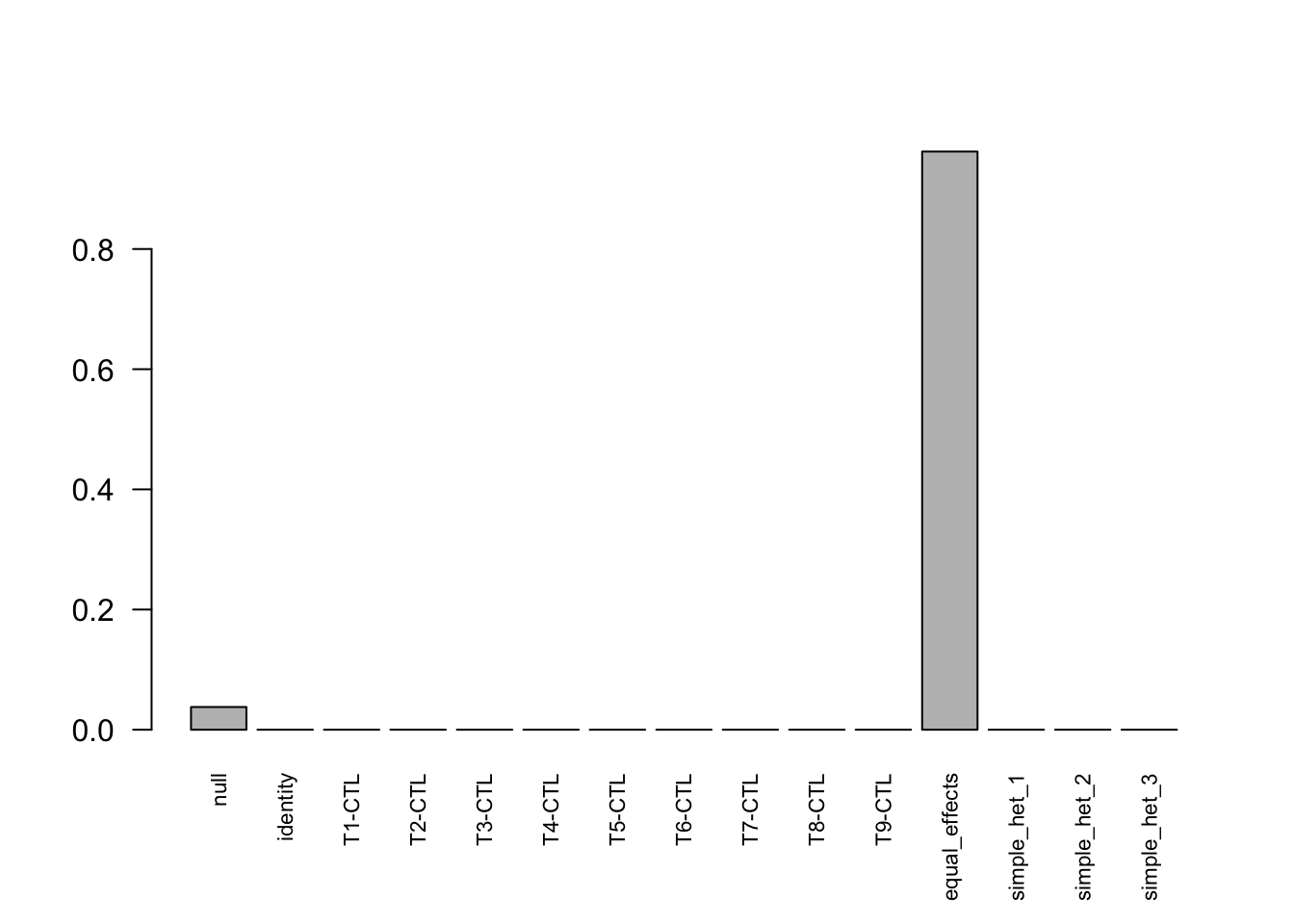

- Control group

- Without signal

- With signal

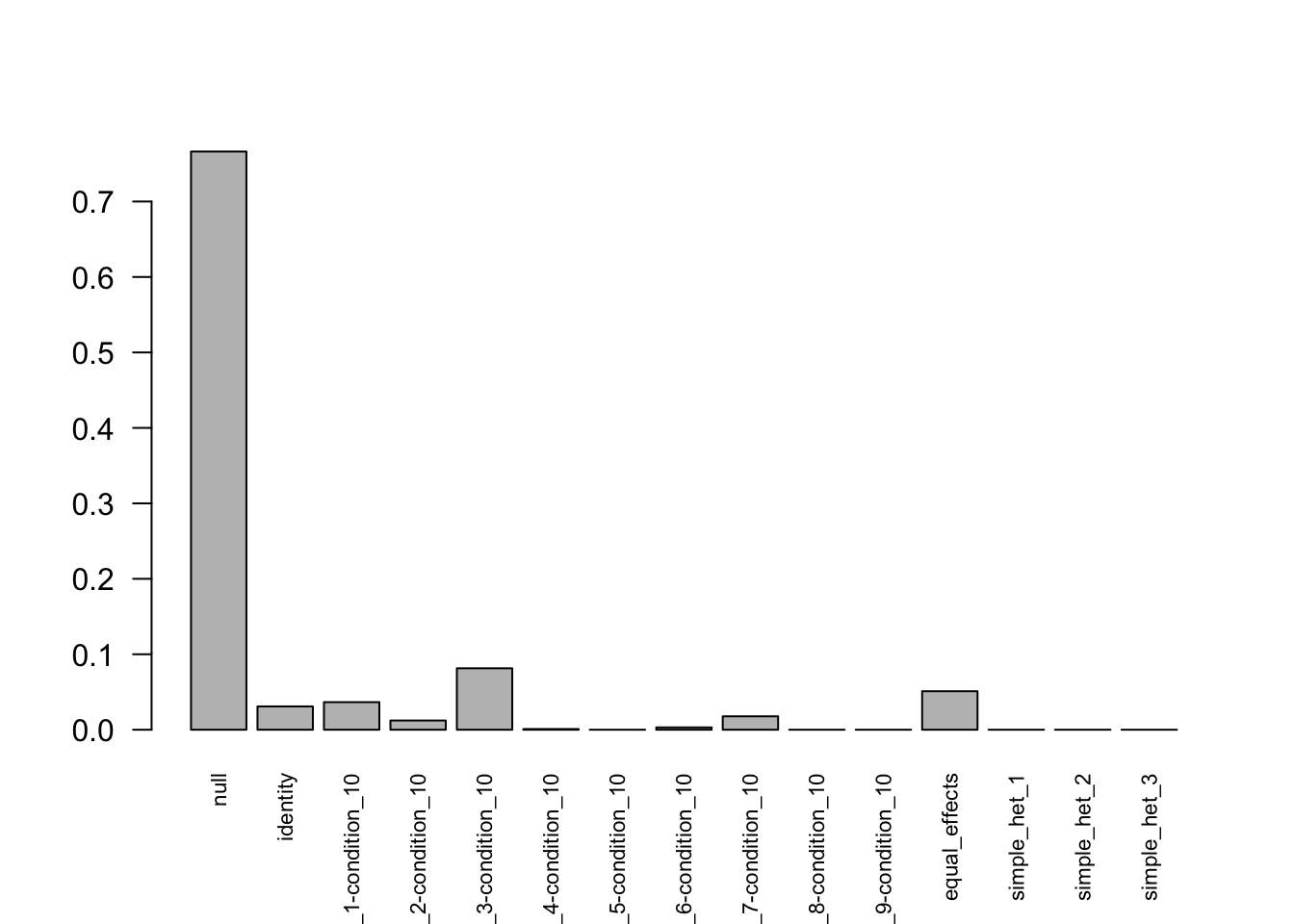

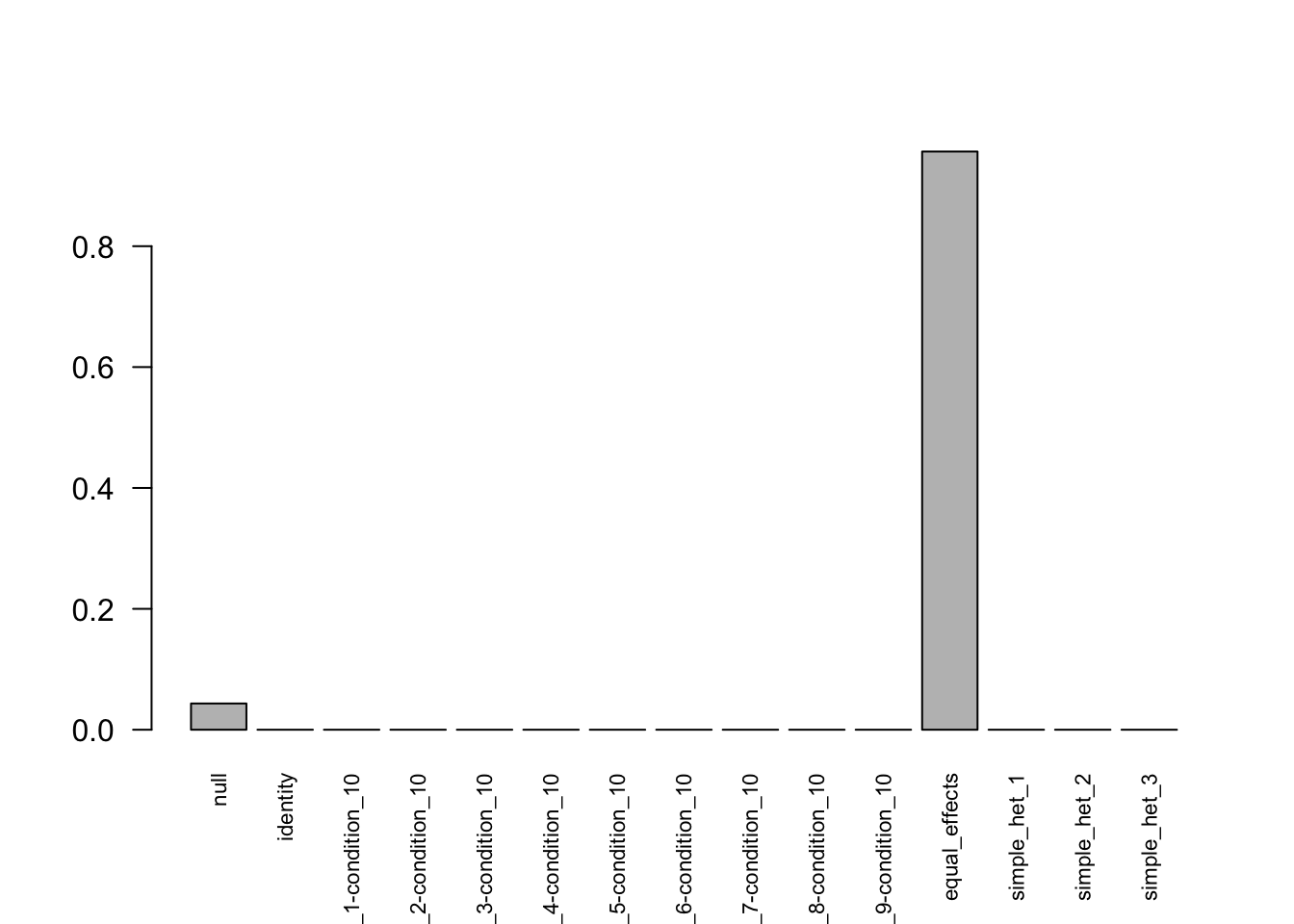

- Without control group

- Without deviations

- With deviations

MASH baseline code

Yuxin Zou

10/30/2018

Last updated: 2021-05-18

Checks: 7 passed, 0 failed

Knit directory: Note/

This reproducible R Markdown analysis was created with workflowr (version 1.6.2). The Checks tab describes the reproducibility checks that were applied when the results were created. The Past versions tab lists the development history.

Great! Since the R Markdown file has been committed to the Git repository, you know the exact version of the code that produced these results.

Great job! The global environment was empty. Objects defined in the global environment can affect the analysis in your R Markdown file in unknown ways. For reproducibility it’s best to always run the code in an empty environment.

The command set.seed(20180529) was run prior to running the code in the R Markdown file. Setting a seed ensures that any results that rely on randomness, e.g. subsampling or permutations, are reproducible.

Great job! Recording the operating system, R version, and package versions is critical for reproducibility.

Nice! There were no cached chunks for this analysis, so you can be confident that you successfully produced the results during this run.

Great job! Using relative paths to the files within your workflowr project makes it easier to run your code on other machines.

Great! You are using Git for version control. Tracking code development and connecting the code version to the results is critical for reproducibility.

The results in this page were generated with repository version 9ef391c. See the Past versions tab to see a history of the changes made to the R Markdown and HTML files.

Note that you need to be careful to ensure that all relevant files for the analysis have been committed to Git prior to generating the results (you can use wflow_publish or wflow_git_commit). workflowr only checks the R Markdown file, but you know if there are other scripts or data files that it depends on. Below is the status of the Git repository when the results were generated:

Ignored files:

Ignored: .DS_Store

Ignored: .Rhistory

Ignored: .Rproj.user/

Ignored: analysis/.Rhistory

Ignored: analysis/figure/

Untracked files:

Untracked: analysis/Li&Stephens.Rmd

Untracked: data/locus1563.RDS

Unstaged changes:

Modified: analysis/LD_space.Rmd

Modified: analysis/amelialocus1563.Rmd

Modified: analysis/bibliography.bib

Note that any generated files, e.g. HTML, png, CSS, etc., are not included in this status report because it is ok for generated content to have uncommitted changes.

These are the previous versions of the repository in which changes were made to the R Markdown (analysis/MASHbaselineCode.Rmd) and HTML (docs/MASHbaselineCode.html) files. If you’ve configured a remote Git repository (see ?wflow_git_remote), click on the hyperlinks in the table below to view the files as they were in that past version.

| File | Version | Author | Date | Message |
|---|---|---|---|---|
| Rmd | 9ef391c | zouyuxin | 2021-05-18 | wflow_publish("analysis/MASHbaselineCode.Rmd") |
| html | 43ebe81 | zouyuxin | 2021-03-30 | Build site. |
| Rmd | 221342a | zouyuxin | 2021-03-30 | wflow_publish("analysis/MASHbaselineCode.Rmd") |
| html | de67b36 | zouyuxin | 2018-12-11 | Build site. |
| Rmd | 86aed4d | zouyuxin | 2018-12-11 | wflow_publish("analysis/MASHbaselineCode.Rmd") |

library(mashr)

Loading required package: ashr

Control group

Without signal

set.seed(1)

data = sim_contrast1(nsamp = 10000, ncond = 10, err_sd = sqrt(0.5))

colnames(data$C) = colnames(data$Chat) = colnames(data$Shat) = c(paste0('T', 1:9), 'CTL')

mash_data = mash_set_data(Bhat = data$Chat, Shat=data$Shat)

mash_data_L = mash_update_data(mash_data, ref=10)

U.c = cov_canonical(mash_data_L)

m = mash(mash_data_L, U.c, algorithm.version = 'R', optmethod = 'mixSQP')

- Computing 10000 x 197 likelihood matrix.

- Likelihood calculations took 1.38 seconds.

- Fitting model with 197 mixture components.

- Model fitting took 7.37 seconds.

- Computing posterior matrices.

- Computation allocated took 0.16 seconds.

length(get_significant_results(m))

[1] 0

# png(filename="../output/MASHbaselineFigures/SimpleContrastEqu_Cor.png", width = 700, height = 500)

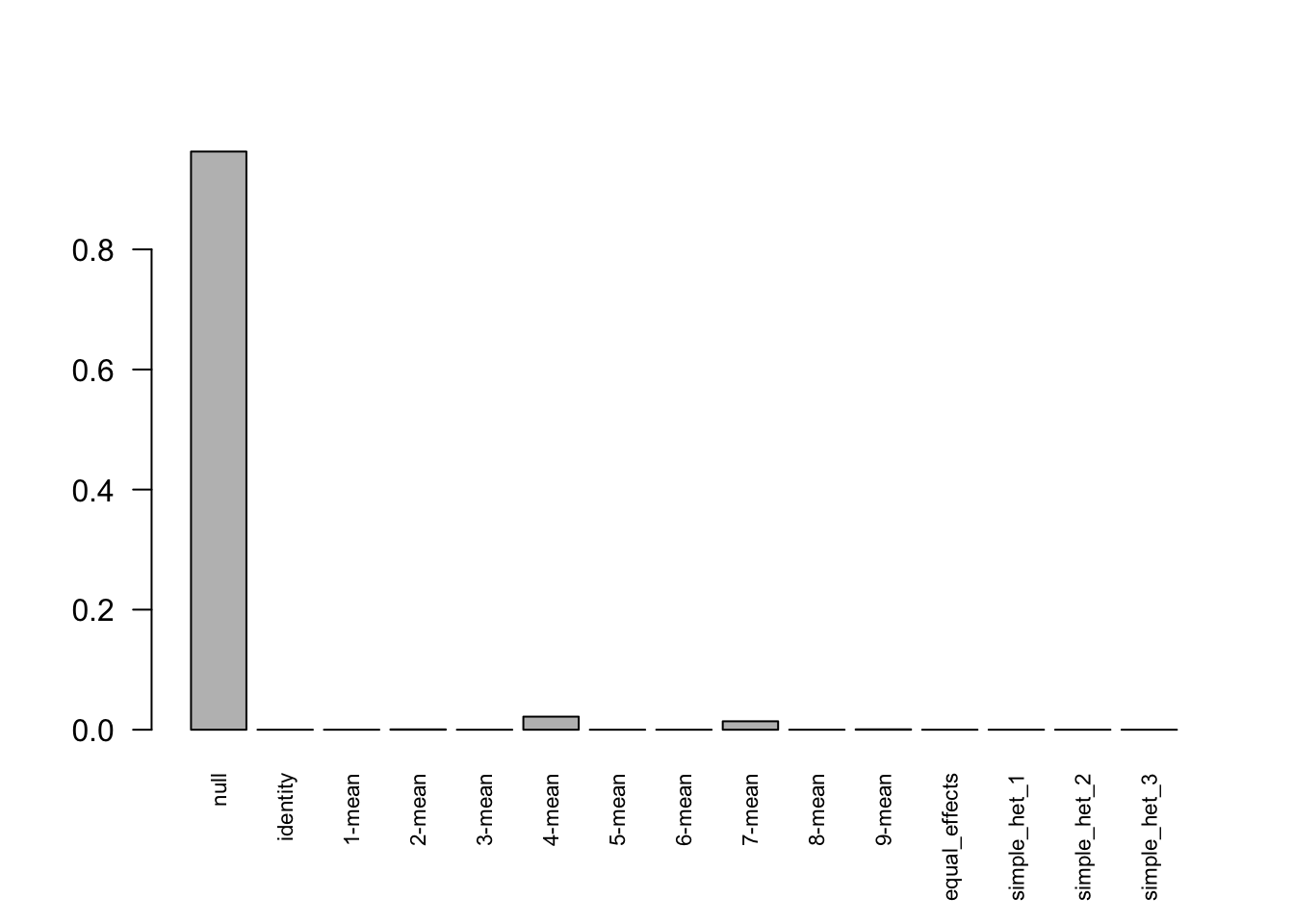

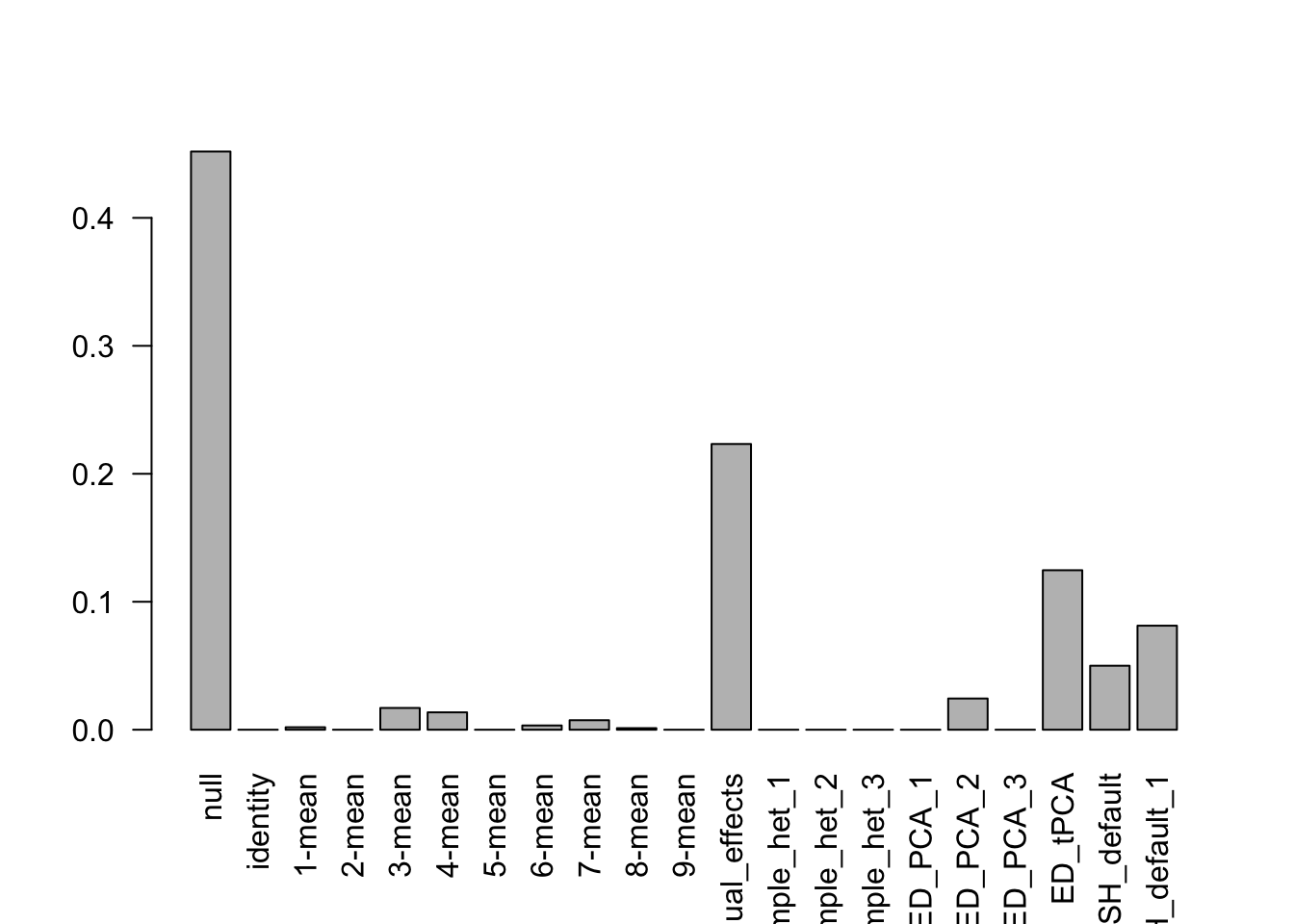

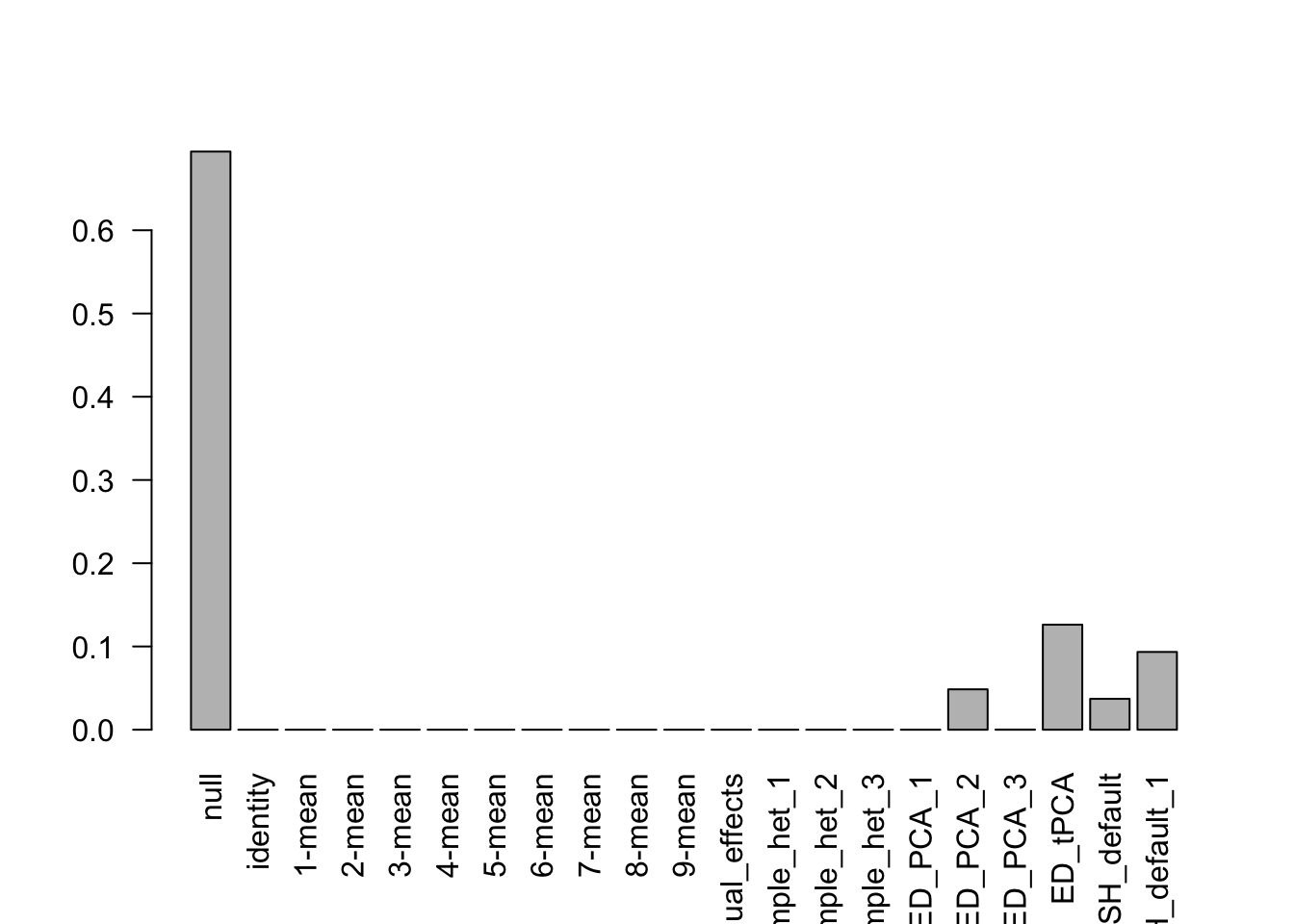

barplot(get_estimated_pi(m),las = 2, cex.names = 0.7)
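Why the control group matters: subtracting the reference condition (`ref=10`) from every other condition makes the nine deltas share the control's noise. The base-R sketch below computes the resulting error covariance L (s^2 I) L^T. It assumes independent errors with a common standard error s = sqrt(0.5), as in the `sim_contrast1` call above. The helper `ref_contrast` is hypothetical. It only mimics the (R-1) x R layout we assume for mashr's `contrast_matrix(R, ref)`.

```r
# Sketch (assumption): error covariance of deltas taken against a common reference,
# with independent errors of equal SE s in every condition.
ref_contrast <- function(R, ref) {
  # hypothetical helper: row i compares non-reference condition i with the reference
  others <- setdiff(seq_len(R), ref)
  L <- matrix(0, R - 1, R)
  L[cbind(seq_along(others), others)] <- 1
  L[, ref] <- -1
  L
}
R <- 10
s <- sqrt(0.5)
L <- ref_contrast(R, ref = R)
V_delta <- L %*% (s^2 * diag(R)) %*% t(L)
# diagonal = 2 * s^2 = 1; off-diagonal = s^2 = 0.5, i.e. correlation 0.5
print(round(V_delta[1:3, 1:3], 3))
```

Under these assumptions every delta has variance 1 and every pair of deltas has covariance 0.5. `Indep.data` keeps the deltas but, as far as we can tell, falls back to unit standard errors with no correlation. That is one plausible reason for the 3507 significant results reported for `Indep.model` when no signal was simulated.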

Indep.data = mash_set_data(Bhat = mash_data_L$Bhat)

U.c = cov_canonical(Indep.data)

Indep.model = mash(Indep.data, U.c, algorithm.version = 'R', optmethod = 'mixSQP')

- Computing 10000 x 197 likelihood matrix.

- Likelihood calculations took 1.11 seconds.

- Fitting model with 197 mixture components.

- Model fitting took 5.12 seconds.

- Computing posterior matrices.

- Computation allocated took 0.09 seconds.

length(get_significant_results(Indep.model))

[1] 3507

# png(filename="../output/MASHbaselineFigures/SimpleContrastEqu_Wrong.png", width = 700, height = 500)

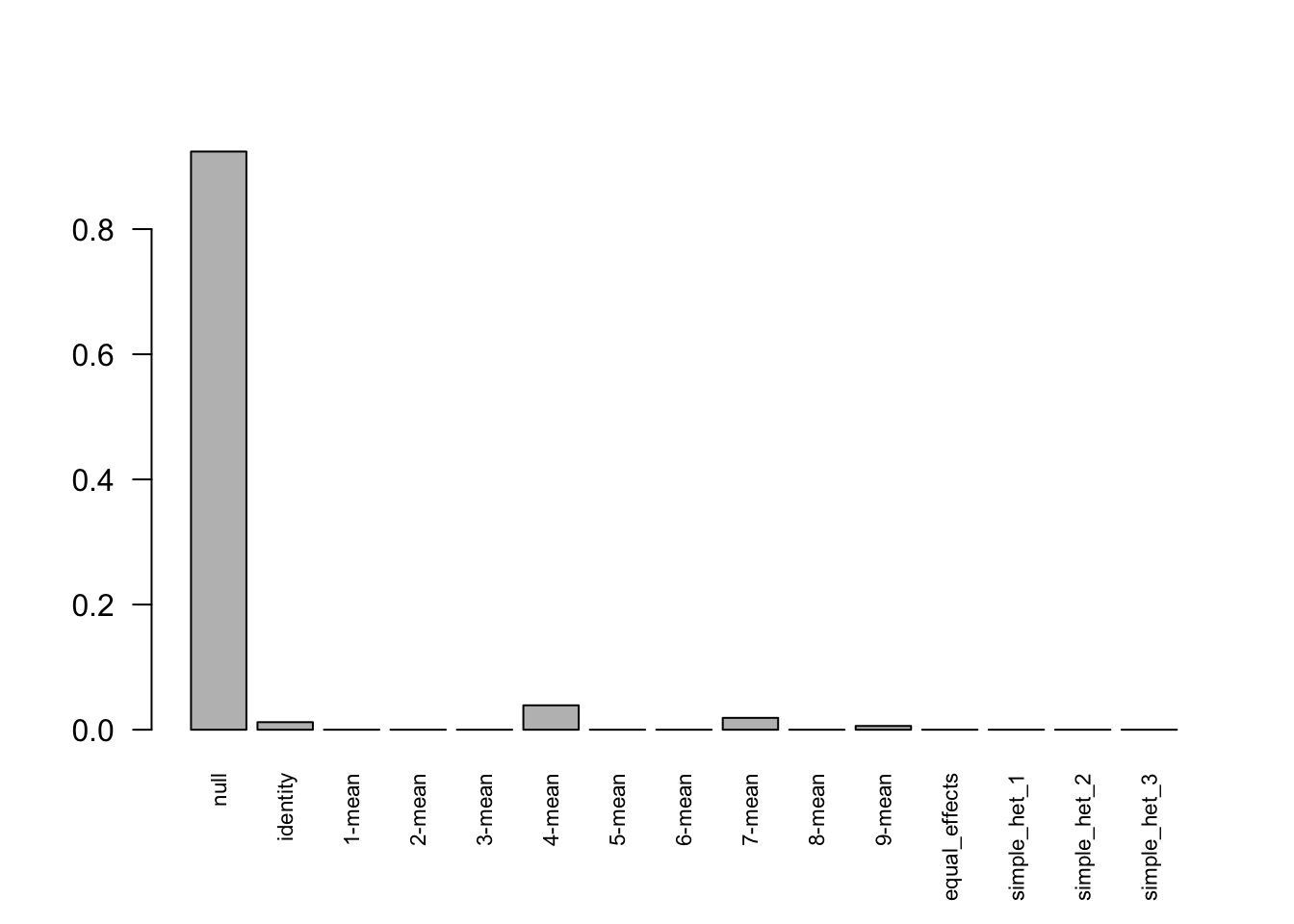

barplot(get_estimated_pi(Indep.model),las = 2, cex.names = 0.7)

median:

delta.median = t(apply(data$C, 1, function(x) x - median(x)))

deltahat.median = t(apply(data$Chat, 1, function(x) x - median(x)))

data.median = mash_set_data(deltahat.median, Shat = sqrt(0.5))

U.c = cov_canonical(data.median)

m.median = mash(data.median, U.c, algorithm.version = 'R', optmethod = 'mixSQP')

- Computing 10000 x 211 likelihood matrix.

- Likelihood calculations took 1.32 seconds.

- Fitting model with 211 mixture components.

- Model fitting took 5.93 seconds.

- Computing posterior matrices.

- Computation allocated took 0.09 seconds.

length(get_significant_results(m.median))

[1] 0

With signal

set.seed(1)

data = sim_contrast2(nsamp = 12000, ncond = 10)

mash_data = mash_set_data(Bhat = data$Chat, Shat=data$Shat)

mash_data_L = mash_update_data(mash_data, ref=10)

U.c = cov_canonical(mash_data_L)

m = mash(mash_data_L, U.c, algorithm.version = 'R')

- Computing 12000 x 211 likelihood matrix.

- Likelihood calculations took 1.21 seconds.

- Fitting model with 211 mixture components.

- Model fitting took 8.23 seconds.

- Computing posterior matrices.

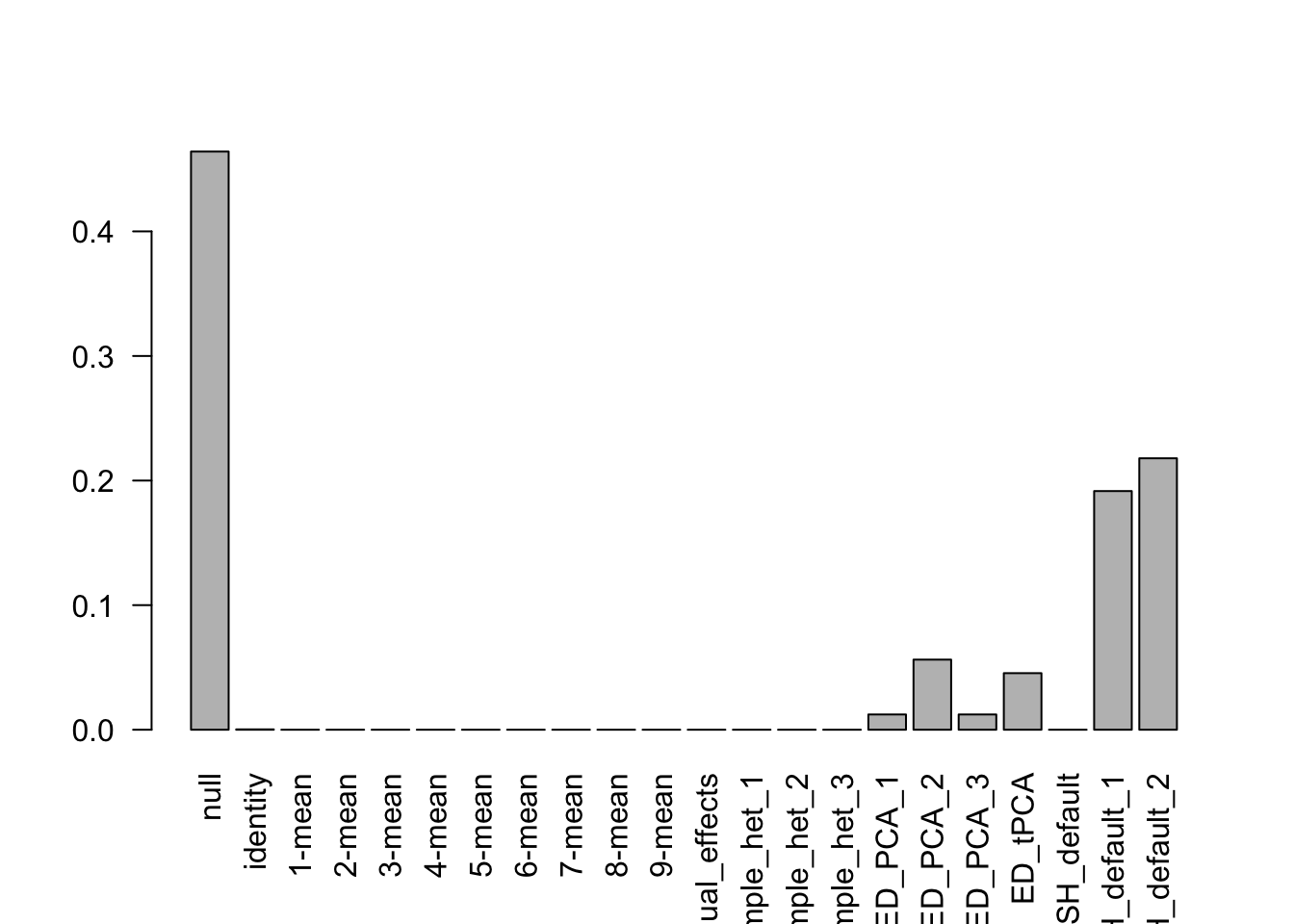

- Computation allocated took 0.16 seconds.length(get_significant_results(m))[1] 75barplot(get_estimated_pi(m),las = 2, cex.names = 0.7)

Indep.data = mash_set_data(Bhat = mash_data_L$Bhat)

U.c = cov_canonical(Indep.data)

Indep.model = mash(Indep.data, U.c, algorithm.version = 'R')

- Computing 12000 x 211 likelihood matrix.

- Likelihood calculations took 1.08 seconds.

- Fitting model with 211 mixture components.

- Model fitting took 6.42 seconds.

- Computing posterior matrices.

- Computation allocated took 0.10 seconds.

length(get_significant_results(Indep.model))

[1] 4404

barplot(get_estimated_pi(Indep.model),las = 2, cex.names = 0.7)

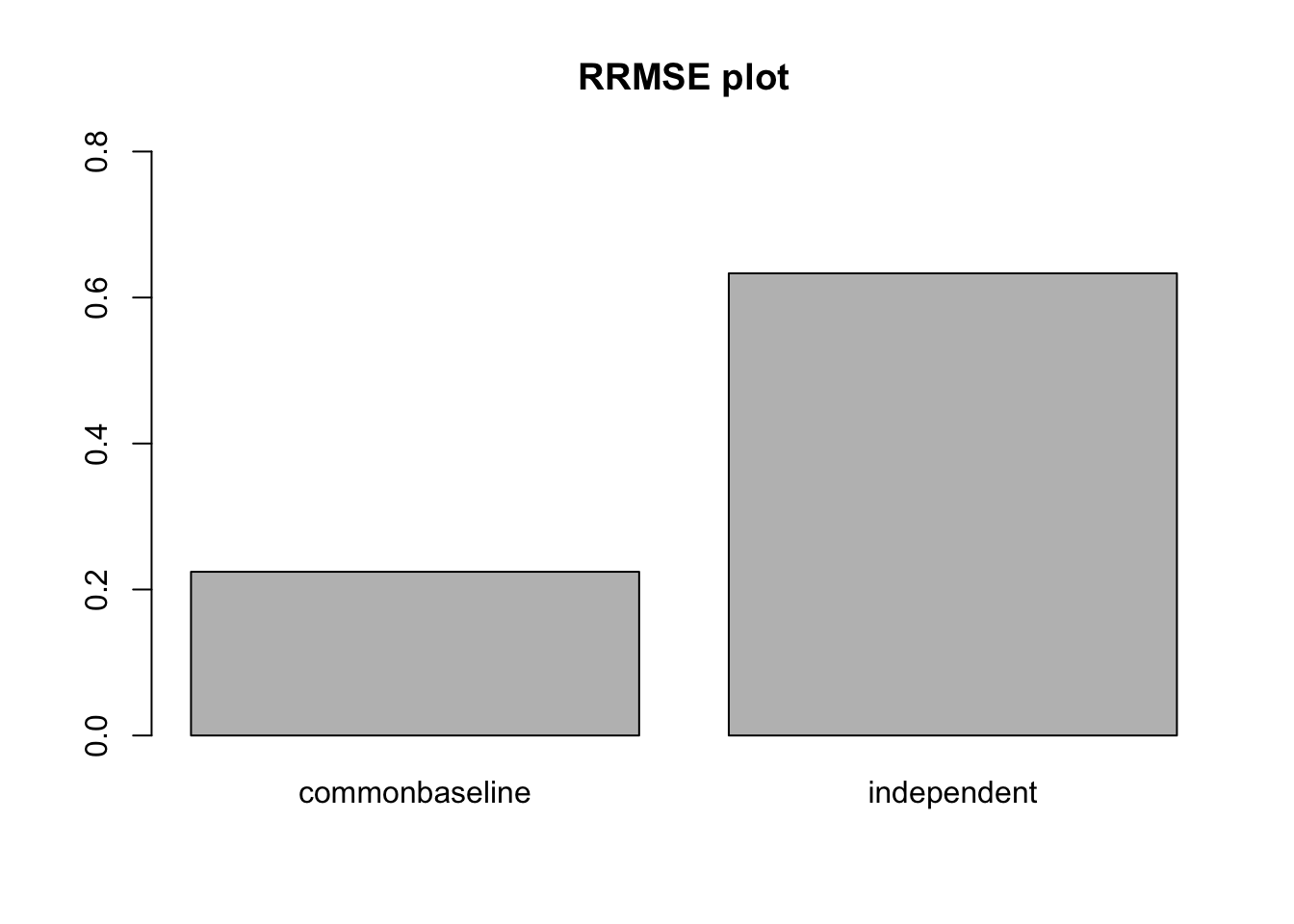

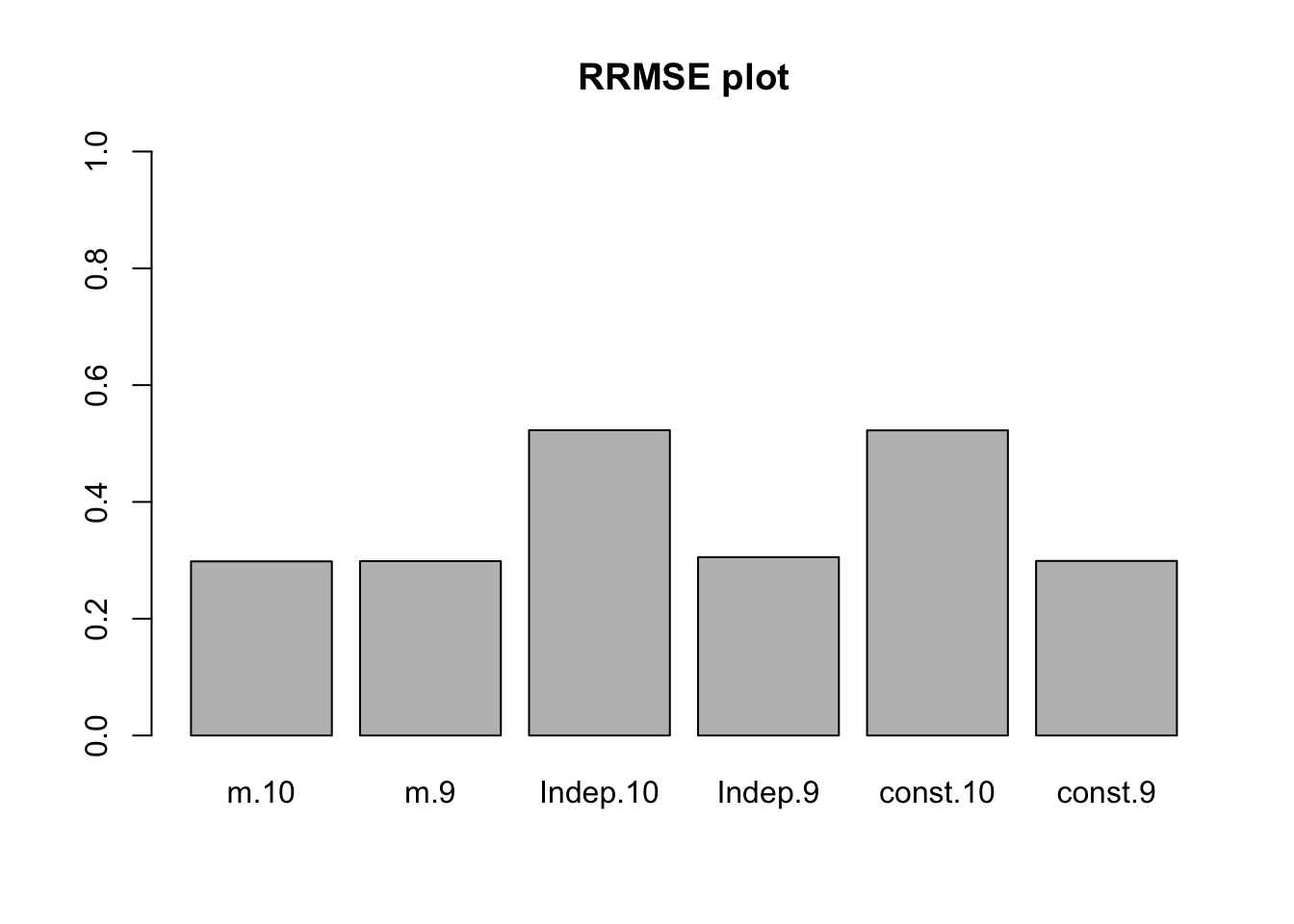

The RRMSE plot:

L = contrast_matrix(10, ref=10)

delta = data$C %*% t(L)

deltahat = data$Chat %*% t(L)
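The barplot below reports the relative root mean squared error (RRMSE) for each model. RRMSE is the RMSE of the posterior mean divided by the RMSE of the raw observed deltas `deltahat`, so values below 1 mean the model improves on the raw estimates. Here is a minimal sketch of the same formula on hypothetical toy vectors. `rrmse`, `truth`, `raw` and `shrunk` are illustrative names, not part of the analysis.

```r
# Sketch: RRMSE as in the barplot call below,
# sqrt( mean((truth - estimate)^2) / mean((truth - raw)^2) ).
rrmse <- function(truth, estimate, raw) {
  sqrt(mean((truth - estimate)^2) / mean((truth - raw)^2))
}
truth  <- c(0, 0, 2, -1)           # hypothetical true deltas
raw    <- c(0.5, -0.5, 3, -2)      # hypothetical noisy observed deltas
shrunk <- raw * 0.5                # hypothetical shrinkage toward zero
r <- rrmse(truth, shrunk, raw)
print(r)                           # below 1: shrinkage beats the raw estimates here
```

By construction, a model that returns the raw estimates unchanged has RRMSE exactly 1.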

# png(filename="../output/MASHbaselineFigures/SimpleContrastNonNullRRMSE.png", width = 700, height = 500)

barplot(c(sqrt(mean((delta - m$result$PosteriorMean)^2)/mean((delta - deltahat)^2)),

sqrt(mean((delta - Indep.model$result$PosteriorMean)^2)/mean((delta - deltahat)^2))

), ylim=c(0,0.8), names.arg = c('commonbaseline','independent'), main = 'RRMSE plot')

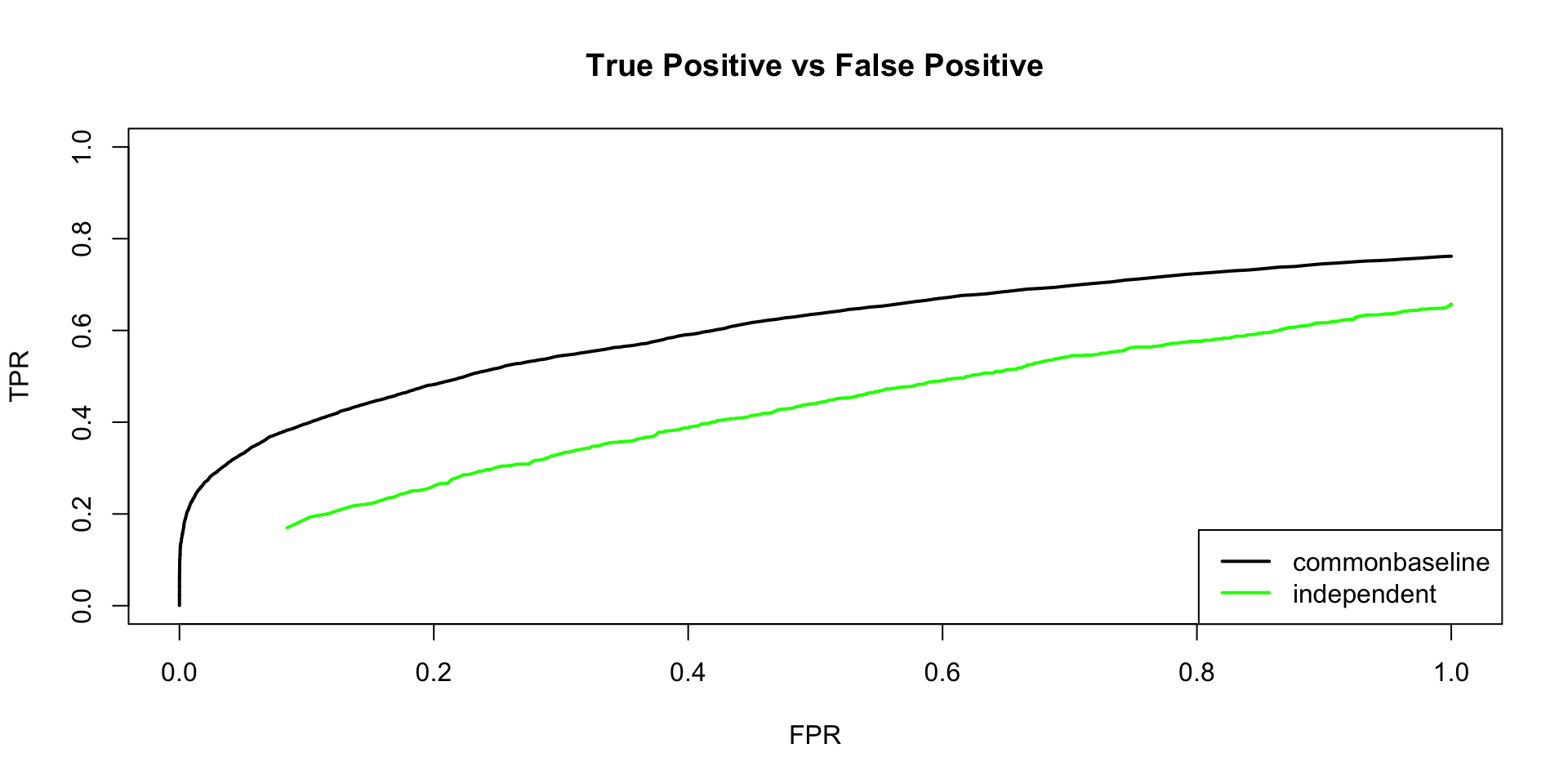

# dev.off()

sign.test.contrast = as.matrix(delta)*m$result$PosteriorMean

sign.test.icor = as.matrix(delta)*Indep.model$result$PosteriorMean

thresh.seq = seq(0, 1, by=0.0005)[-1]

contrast = matrix(0,length(thresh.seq), 2)

icor = matrix(0,length(thresh.seq), 2)

colnames(contrast) = colnames(icor) = c('TPR', 'FPR')

for(t in 1:length(thresh.seq)){

contrast[t,] = c(sum(sign.test.contrast>0 & m$result$lfsr <= thresh.seq[t])/sum(delta!=0), sum(delta==0 & m$result$lfsr <=thresh.seq[t])/sum(delta==0))

icor[t,] = c(sum(sign.test.icor>0 & Indep.model$result$lfsr <= thresh.seq[t])/sum(delta!=0), sum(delta==0 & Indep.model$result$lfsr <=thresh.seq[t])/sum(delta==0))

}
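The threshold loop above can also be written with `sapply`. This sketch uses hypothetical toy matrices, and `roc_points` is our own helper rather than a mashr function. As in the loop, a true positive requires both `lfsr <= thresh` and a posterior mean whose sign agrees with the true delta. The confident but wrong-sign call in the last cell of the toy data is therefore not counted.

```r
# Sketch: TPR/FPR over lfsr thresholds, one row per threshold.
# truth, pm (posterior mean) and lfsr are equal-sized matrices (hypothetical values).
roc_points <- function(truth, pm, lfsr, thresh) {
  t(sapply(thresh, function(th) {
    sig <- lfsr <= th
    c(TPR = sum(truth * pm > 0 & sig) / sum(truth != 0),  # correct sign and called
      FPR = sum(truth == 0 & sig) / sum(truth == 0))      # true null but called
  }))
}
truth <- matrix(c(0, 0, 1, -1), 2, 2)
pm    <- matrix(c(0.1, -0.2, 0.8, 0.5), 2, 2)    # last cell has the wrong sign
lfsr  <- matrix(c(0.4, 0.9, 0.01, 0.03), 2, 2)
roc <- roc_points(truth, pm, lfsr, thresh = c(0.02, 0.05, 0.5))
print(roc)
```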

median:

delta.median = t(apply(data$C, 1, function(x) x - median(x)))

deltahat.median = t(apply(data$Chat, 1, function(x) x - median(x)))

data.median = mash_set_data(deltahat.median, Shat = sqrt(0.5))

U.c = cov_canonical(data.median)

m.median = mash(data.median, U.c)

- Computing 12000 x 226 likelihood matrix.

- Likelihood calculations took 0.51 seconds.

- Fitting model with 226 mixture components.

- Model fitting took 7.91 seconds.

- Computing posterior matrices.

- Computation allocated took 0.20 seconds.

length(get_significant_results(m.median))

[1] 68

Without control group

Without deviations

set.seed(1)

data = sim_contrast1(nsamp = 10000, ncond = 10, err_sd = 1)

colnames(data$C) = colnames(data$Chat) = colnames(data$Shat) = 1:10

mash_data = mash_set_data(Bhat = data$Chat, Shat=data$Shat)

mash_data_L = mash_update_data(mash_data, ref='mean')

U.c = cov_canonical(mash_data_L)

m = mash(mash_data_L, U.c, algorithm.version = 'R')

- Computing 10000 x 197 likelihood matrix.

- Likelihood calculations took 0.75 seconds.

- Fitting model with 197 mixture components.

- Model fitting took 4.49 seconds.

- Computing posterior matrices.

- Computation allocated took 0.08 seconds.

length(get_significant_results(m))

[1] 0

# png(filename = '../output/MASHbaselineFigures/MeanEqu_Cor.png', width = 700, height = 500)

barplot(get_estimated_pi(m),las = 2, cex.names = 0.7)

# dev.off()

Indep.data = mash_set_data(Bhat = mash_data_L$Bhat, Shat = sqrt(9/10))

U.c = cov_canonical(Indep.data)

Indep.model = mash(Indep.data, U.c, algorithm.version = 'R')

- Computing 10000 x 197 likelihood matrix.

- Likelihood calculations took 0.84 seconds.

- Fitting model with 197 mixture components.

- Model fitting took 5.20 seconds.

- Computing posterior matrices.

- Computation allocated took 0.10 seconds.

length(get_significant_results(Indep.model))

[1] 0

# png(filename = '../output/MASHbaselineFigures/MeanEqu_Wrong.png', width = 700, height = 500)

barplot(get_estimated_pi(Indep.model),las = 2, cex.names = 0.7)
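The `Shat = sqrt(9/10)` used for `Indep.data` comes from the mean-centring contrast. With unit-variance independent errors, x_j - mean(x) has variance 1 - 1/R = 9/10 for R = 10, and any two centred deltas have covariance -1/R. The base-R sketch below builds the centring matrix by hand, the way `L.9` is built later on, and drops one row to keep R-1 contrasts.

```r
# Sketch: error covariance of mean-centred deltas when Var(e_j) = 1 and errors are independent.
R <- 10
C <- diag(R) - matrix(1 / R, R, R)   # x_j - mean(x) for all R conditions
L <- C[-R, ]                         # keep R-1 contrasts (one row dropped)
V <- L %*% diag(R) %*% t(L)
# diagonal 1 - 1/R = 0.9 (so SE = sqrt(9/10)); off-diagonal -1/R = -0.1
print(round(V[1:3, 1:3], 3))
```

The independent fit therefore uses the correct marginal standard error but ignores the -1/R correlations. Models 5 and 6 below additionally replace it with `Shat = 1`.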

# dev.off()

median:

delta.median = t(apply(data$C, 1, function(x) x - median(x)))

deltahat.median = t(apply(data$Chat, 1, function(x) x - median(x)))

data.median = mash_set_data(deltahat.median, Shat = 1)

U.c = cov_canonical(data.median)

m.median = mash(data.median, U.c)

- Computing 10000 x 211 likelihood matrix.

- Likelihood calculations took 0.39 seconds.

- Fitting model with 211 mixture components.

- Model fitting took 5.68 seconds.

- Computing posterior matrices.

- Computation allocated took 0.11 seconds.

length(get_significant_results(m.median))

[1] 0

With deviations

generate_data = function(n, p, V, Utrue, err_sd=0.01, pi=NULL){

if (is.null(pi)) {

pi = rep(1, length(Utrue)) # default to uniform distribution

}

stopifnot(length(pi) == length(Utrue)) # one mixture weight per covariance matrix

for (j in 1:length(Utrue)) {

stopifnot(is.matrix(Utrue[[j]]), nrow(Utrue[[j]]) == p, ncol(Utrue[[j]]) == p) # each U must be p x p

}

pi <- pi / sum(pi) # normalize pi to sum to one

which_U <- sample(1:length(pi), n, replace=TRUE, prob=pi)

Beta = matrix(0, nrow=n, ncol=p)

for(i in 1:n){

Beta[i,] = MASS::mvrnorm(1, rep(0, p), Utrue[[which_U[i]]])

}

Shat = matrix(err_sd, nrow=n, ncol=p, byrow = TRUE)

E = MASS::mvrnorm(n, rep(0, p), Shat[1,]^2 * V)

Bhat = Beta + E

return(list(B = Beta, Bhat=Bhat, Shat = Shat, whichU = which_U))

}

set.seed(1)

n = 500

R = 10

err_sd = 1

ncond = R; nsamp = n

b = rnorm(nsamp, sd=3)

B.all = matrix(rep(b, ncond), nrow = nsamp, ncol = ncond)

B.zero = matrix(0, nrow = nsamp, ncol = ncond)

B.two = B.zero

b2 = rnorm(nsamp, sd=3)

B.two[, 1:2] = b2

B.last = B.zero

b3 = rnorm(nsamp, sd=3)

B.last[, R] = b3

B = rbind(B.zero, B.all, B.two, B.last)

Shat = matrix(err_sd, nrow = nrow(B), ncol = ncol(B))

E = matrix(rnorm(length(Shat), mean = 0, sd = Shat), nrow = nrow(B),

ncol = ncol(B))

Bhat = B + E

row_ids = paste0("effect_", 1:nrow(B))

col_ids = 1:ncol(B)

rownames(B) = row_ids

colnames(B) = col_ids

rownames(Bhat) = row_ids

colnames(Bhat) = col_ids

rownames(Shat) = row_ids

colnames(Shat) = col_ids

simdata.10 = list(B = B, Bhat = Bhat, Shat = Shat)

null.ind = 1:1000
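`null.ind = 1:1000` marks the first two blocks (`B.zero` and `B.all`) as null. For `B.all` this works because its effect is identical in every condition, so it disappears once each condition is compared with the mean. Below is a small base-R check on freshly simulated toy matrices. The sizes and the `center` helper are illustrative only.

```r
# Sketch: a shared effect vanishes after mean-centring; a condition-specific one does not.
set.seed(1)
R <- 10
b <- rnorm(5, sd = 3)
B_all <- matrix(rep(b, R), nrow = 5, ncol = R)   # same construction as B.all above
B_two <- matrix(0, 5, R)
B_two[, 1:2] <- rnorm(5, sd = 3)                 # effect only in conditions 1 and 2
center <- function(X) X - rowMeans(X)           # subtract each row's mean
print(max(abs(center(B_all))))                   # zero up to rounding: null deviations
print(any(center(B_two) != 0))                   # these rows do deviate from the mean
```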

simdata.9 = simdata.10

simdata.9$B = simdata.10$B[,c(1:8,10,9)]

simdata.9$Bhat = simdata.10$Bhat[,c(1:8,10,9)]

simdata.9$Shat = simdata.10$Shat[,c(1:8,10,9)]

# true cov

Utrue1 = matrix(0,R,R)

Utrue1[1:2,1:2] = 9

Utrue2 = matrix(0,R,R)

Utrue2[R,R] = 9

L.10 = contrast_matrix(R, 'mean')

L.9 = matrix(-1/R, R, R)

diag(L.9) = (R-1)/R

L.9 = L.9[c(1:8,10),]

Ulist.10 = list(U1 = L.10 %*% Utrue1 %*% t(L.10), U2 = L.10 %*% Utrue2 %*% t(L.10))

Ulist.9 = list(U1 = L.9 %*% Utrue1 %*% t(L.9), U2 = L.9 %*% Utrue2 %*% t(L.9))

data.10 = mash_set_data(simdata.10$Bhat, simdata.10$Shat)

data.9 = mash_set_data(simdata.9$Bhat, simdata.9$Shat)

Model 1

data.L.10 = mash_update_data(data.10, ref = 'mean')

U.c = cov_canonical(data.L.10)

m.1by1 = mash_1by1(data.L.10)

strong = get_significant_results(m.1by1)

U.pca = cov_pca(data.L.10, 3,subset=strong)

U.flash = cov_flash(data.L.10, subset = strong)

U.ed = cov_ed(data.L.10, c(U.pca, U.flash), subset=strong)

m.10 = mash(data.L.10, c(U.c,U.ed), algorithm.version = 'R')

- Computing 2000 x 321 likelihood matrix.

- Likelihood calculations took 0.26 seconds.

- Fitting model with 321 mixture components.

- Model fitting took 3.80 seconds.

- Computing posterior matrices.

- Computation allocated took 0.04 seconds.

# m.10 = mash(data.L.10, Ulist.10, algorithm.version = 'R')

Model 2

data.L.9 = mash_update_data(data.9, ref = 'mean')

U.c = cov_canonical(data.L.9)

m.1by1 = mash_1by1(data.L.9)

strong = get_significant_results(m.1by1)

U.pca = cov_pca(data.L.9,3,subset=strong)

U.flash = cov_flash(data.L.9, subset = strong)

U.ed = cov_ed(data.L.9, c(U.pca, U.flash), subset=strong)

m.9 = mash(data.L.9, c(U.c,U.ed), algorithm.version = 'R')

- Computing 2000 x 358 likelihood matrix.

- Likelihood calculations took 0.30 seconds.

- Fitting model with 358 mixture components.

- Model fitting took 4.50 seconds.

- Computing posterior matrices.

- Computation allocated took 0.03 seconds.

# m.9 = mash(data.L.9, Ulist.9, algorithm.version = 'R')

Model 3

L = contrast_matrix(R, ref='mean')

Indep.data.10 = mash_set_data(data.10$Bhat %*% t(L), Shat = sqrt(9/10))

U.c = cov_canonical(Indep.data.10)

m.1by1 = mash_1by1(Indep.data.10)

strong = get_significant_results(m.1by1)

U.pca = cov_pca(Indep.data.10,3,subset=strong)

U.flash = cov_flash(Indep.data.10, subset = strong)

U.ed = cov_ed(Indep.data.10, c(U.pca,U.flash), subset=strong)

A = rbind(diag(R-1),-1)
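The R mean-centred deviations sum to zero. The one dropped by the contrast (assumed here to be the last, matching `A`) therefore equals minus the sum of the other R-1. `A = rbind(diag(R-1), -1)` encodes that map. Our reading is that passing it to `mash` requests posterior summaries of A b, that is, of all R deviations. The base-R check below uses an arbitrary example vector.

```r
# Sketch: A maps the R-1 kept mean-centred contrasts back to all R deviations.
R <- 10
A <- rbind(diag(R - 1), -1)          # R x (R-1); last row is all -1
x <- c(3, 1, 4, 1, 5, 9, 2, 6, 5, 3) # arbitrary example values
d_full <- x - mean(x)                # all R deviations (they sum to zero)
d_kept <- d_full[-R]                 # the R-1 contrasts actually modelled
d_back <- as.vector(A %*% d_kept)    # last entry = -sum(d_kept)
print(isTRUE(all.equal(d_back, d_full)))
```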

Indep.10 = mash(Indep.data.10, c(U.c,U.ed), algorithm.version = 'R', A=A)

- Computing 2000 x 321 likelihood matrix.

- Likelihood calculations took 0.27 seconds.

- Fitting model with 321 mixture components.

- Model fitting took 3.88 seconds.

- Computing posterior matrices.

- Computation allocated took 0.03 seconds.

# A = rbind(diag(R-1),-1)

# Indep.10 = mash(Indep.data.10, Ulist.10, algorithm.version = 'R', A=A)

Model 4

L = contrast_matrix(R, ref='mean')

Indep.data.9 = mash_set_data(data.9$Bhat %*% t(L), Shat = sqrt(9/10))

U.c = cov_canonical(Indep.data.9)

m.1by1 = mash_1by1(Indep.data.9)

strong = get_significant_results(m.1by1)

U.pca = cov_pca(Indep.data.9,3,subset=strong)

U.flash = cov_flash(Indep.data.9, subset = strong)

U.ed = cov_ed(Indep.data.9, c(U.pca,U.flash), subset=strong)

A = rbind(diag(R-1),-1)

Indep.9 = mash(Indep.data.9, c(U.c,U.ed), algorithm.version = 'R', A=A)

- Computing 2000 x 358 likelihood matrix.

- Likelihood calculations took 0.30 seconds.

- Fitting model with 358 mixture components.

- Model fitting took 4.88 seconds.

- Computing posterior matrices.

- Computation allocated took 0.04 seconds.

# A = rbind(diag(R-1),-1)

# Indep.9 = mash(Indep.data.9, Ulist.9, algorithm.version = 'R', A=A)

Model 5

L = contrast_matrix(R, ref='mean')

const.data.10 = mash_set_data(data.10$Bhat %*% t(L), Shat = 1)

U.c = cov_canonical(const.data.10)

m.1by1 = mash_1by1(const.data.10)

strong = get_significant_results(m.1by1)

U.pca = cov_pca(const.data.10,3,subset=strong)

U.flash = cov_flash(const.data.10, subset = strong)

U.ed = cov_ed(const.data.10, c(U.pca, U.flash), subset=strong)

A = rbind(diag(R-1),-1)

Const.10 = mash(const.data.10, c(U.c,U.ed), algorithm.version = 'R', A=A)

- Computing 2000 x 321 likelihood matrix.

- Likelihood calculations took 0.26 seconds.

- Fitting model with 321 mixture components.

- Model fitting took 3.87 seconds.

- Computing posterior matrices.

- Computation allocated took 0.03 seconds.

# A = rbind(diag(R-1),-1)

# Const.10 = mash(const.data.10, Ulist.10, algorithm.version = 'R', A=A)

Model 6

L = contrast_matrix(R, ref='mean')

const.data.9 = mash_set_data(data.9$Bhat %*% t(L), Shat = 1)

U.c = cov_canonical(const.data.9)

m.1by1 = mash_1by1(const.data.9)

strong = get_significant_results(m.1by1)

U.pca = cov_pca(const.data.9,3,subset=strong)

U.flash = cov_flash(const.data.9, subset = strong)

U.ed = cov_ed(const.data.9, c(U.pca, U.flash), subset=strong)

A = rbind(diag(R-1),-1)

Const.9 = mash(const.data.9, c(U.c,U.ed), algorithm.version = 'R', A=A)

- Computing 2000 x 358 likelihood matrix.

- Likelihood calculations took 0.32 seconds.

- Fitting model with 358 mixture components.

- Model fitting took 4.93 seconds.

- Computing posterior matrices.

- Computation allocated took 0.03 seconds.

# A = rbind(diag(R-1),-1)

# Const.9 = mash(const.data.9, Ulist.9, algorithm.version = 'R', A=A)

Likelihood

model 1 model 2 model 3 model 4 model 5 model 6

log likelihood -25321 -25329.51 -25994.5 -26669.72 -26053.95 -26744.97

# significance 361 357.00 226.0 395.00 208.00 355.00

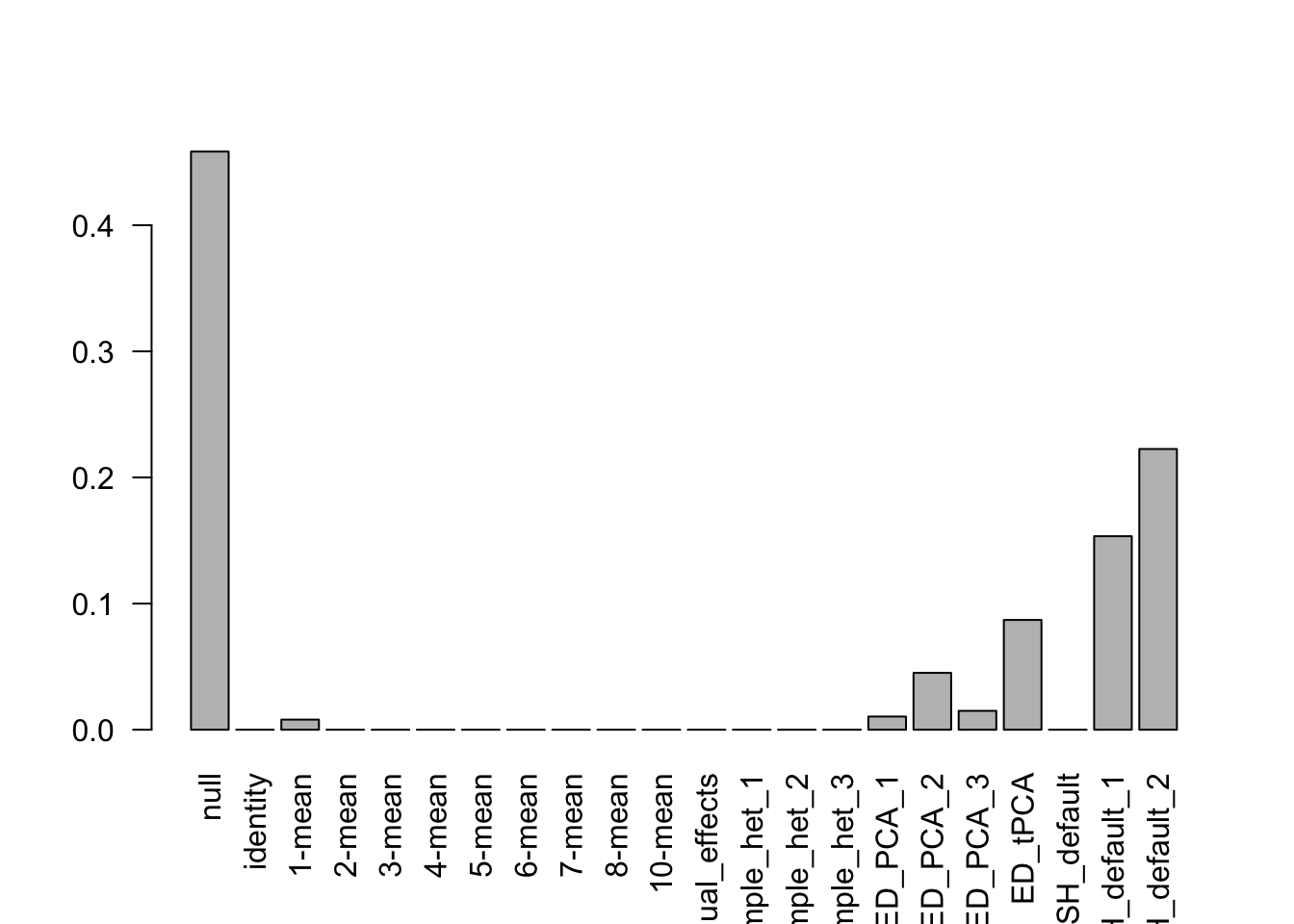

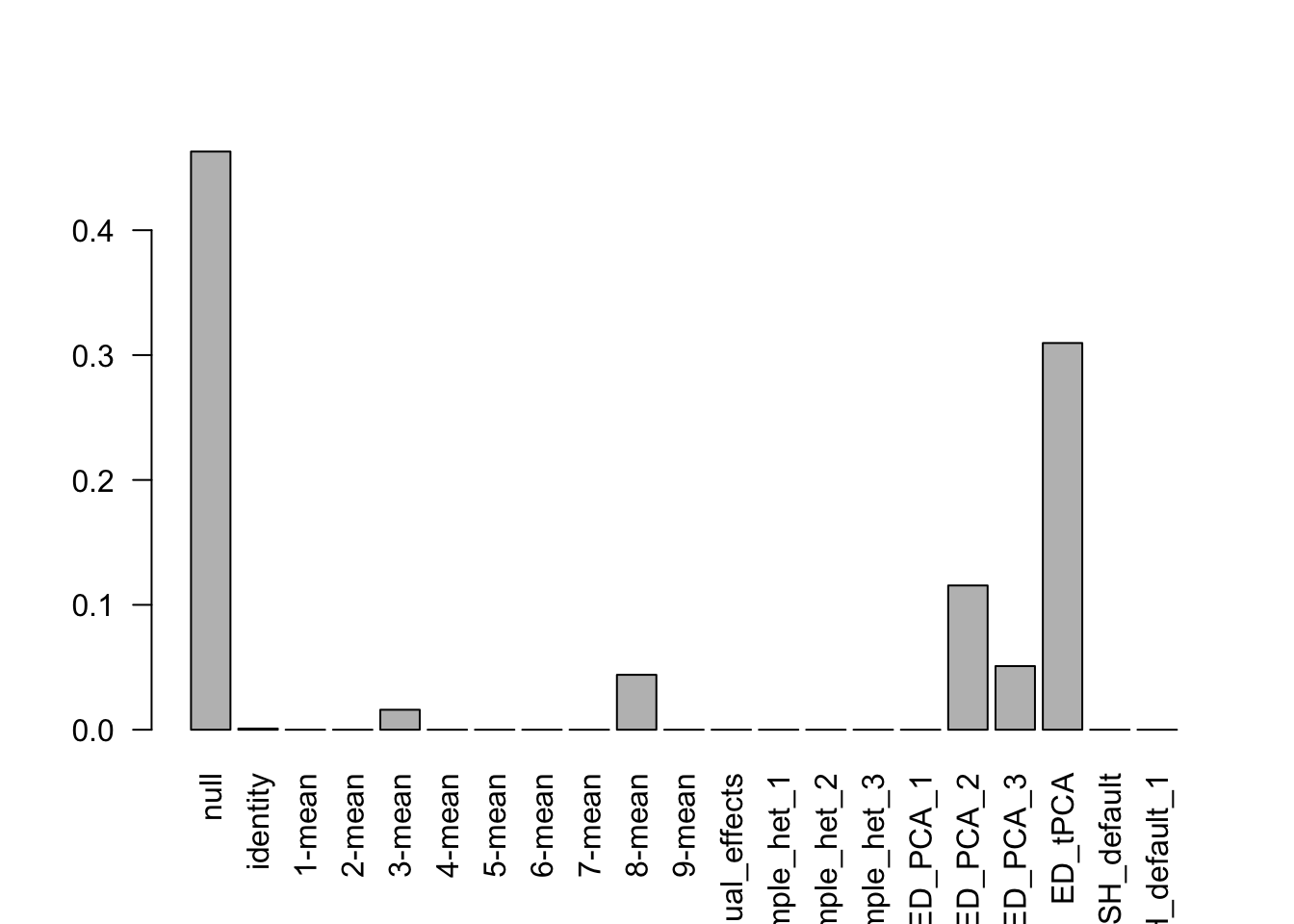

# False positive 3 3.00 2.0 4.00 0.00 3.00barplot(get_estimated_pi(m.10), las=2)

barplot(get_estimated_pi(m.9), las=2)

barplot(get_estimated_pi(Indep.10), las=2)

barplot(get_estimated_pi(Indep.9), las=2)

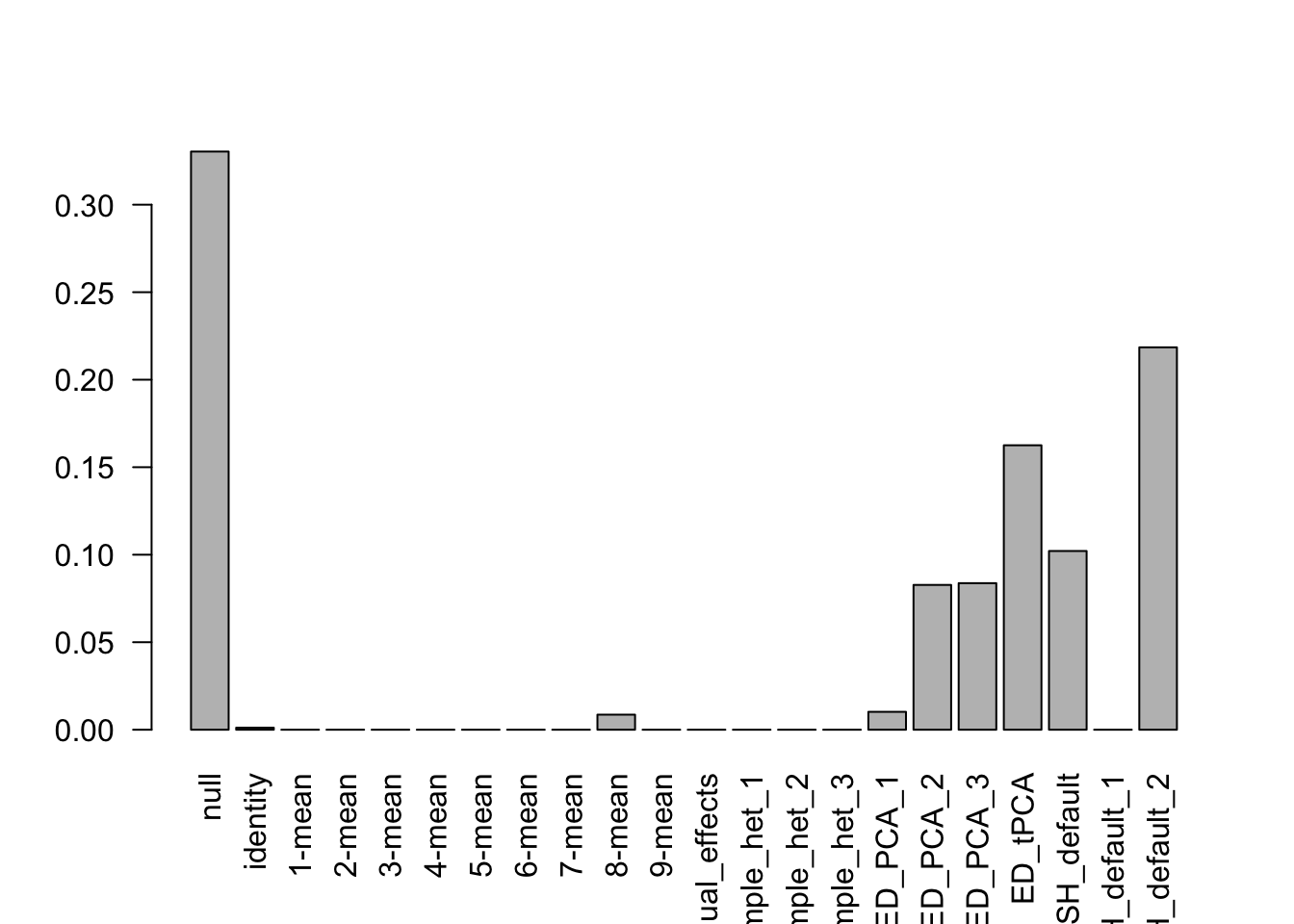

barplot(get_estimated_pi(Const.10), las=2)

barplot(get_estimated_pi(Const.9), las=2)

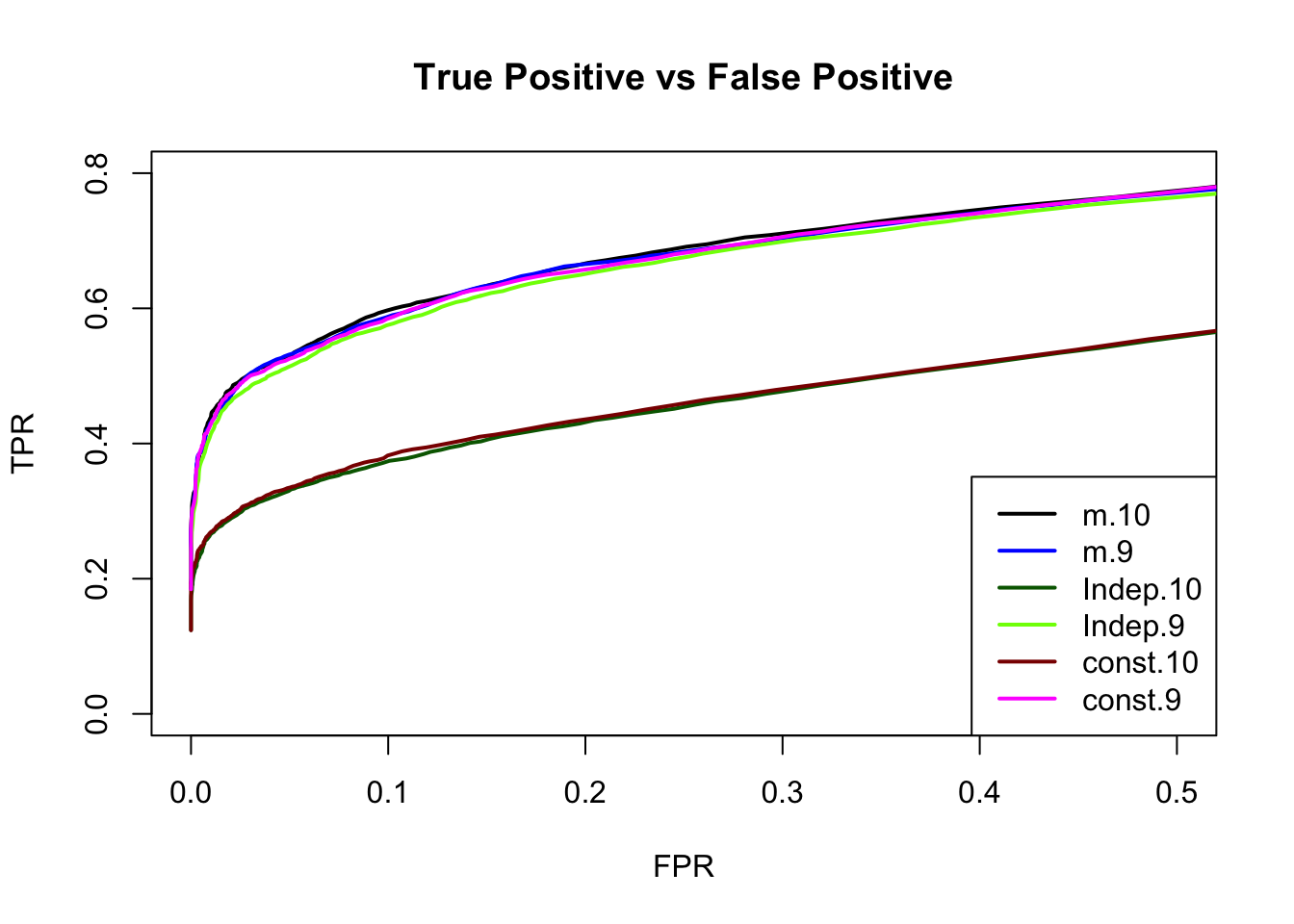

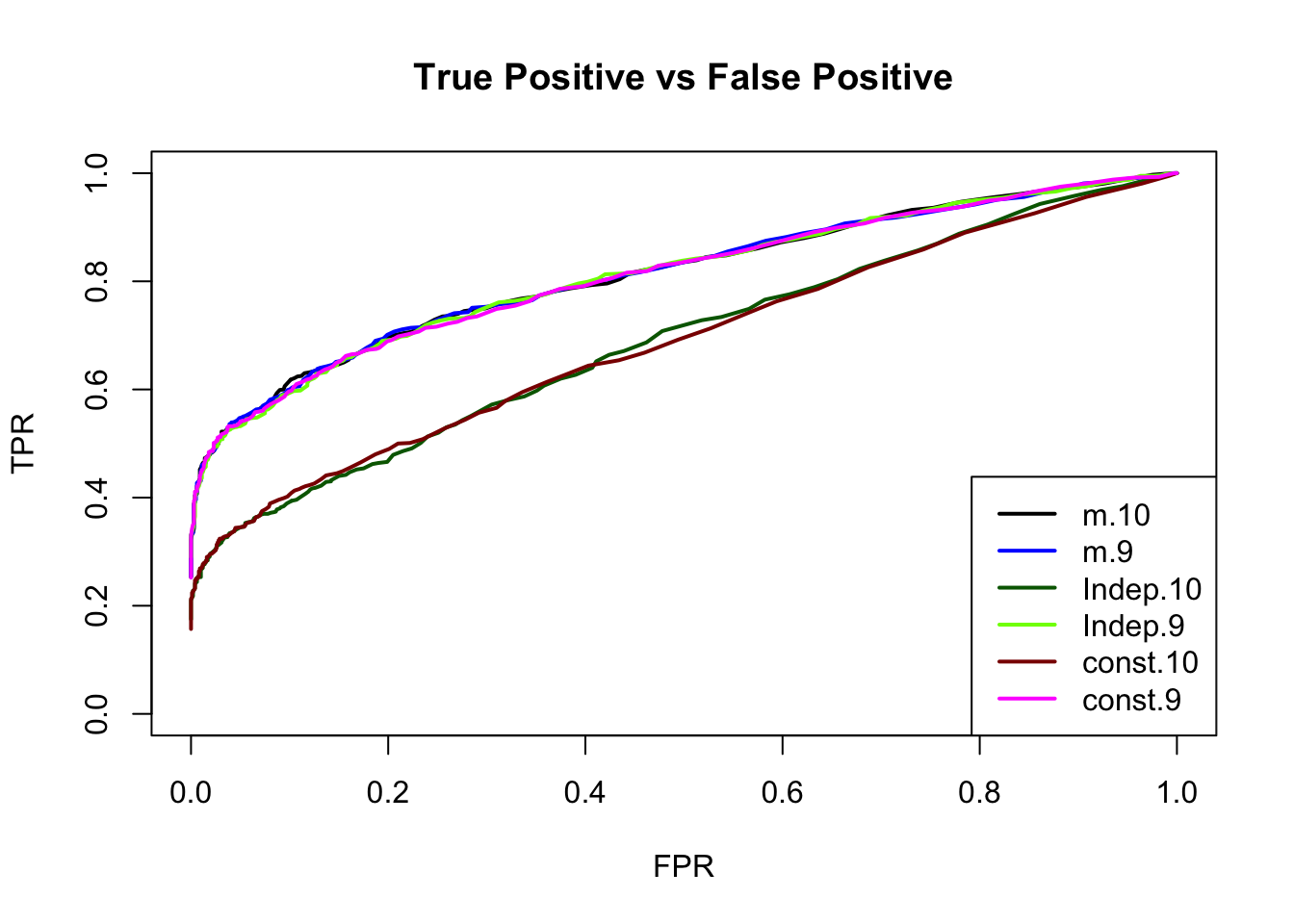

Each effect is treated as a single discovery across all conditions

thresh.seq = seq(0, 1, by=0.005)[-1]

contrast.10 = contrast.9 = indep.10 = indep.9 = const.10 = const.9 = matrix(0,length(thresh.seq), 2)

colnames(contrast.10) = colnames(contrast.9) = colnames(indep.10) = colnames(indep.9) = colnames(const.10) = colnames(const.9) = c('TPR', 'FPR')

for(t in 1:length(thresh.seq)){

contrast.10[t,] = c(sum(get_significant_results(m.10, thresh.seq[t]) > 1000)/1000,

sum(get_significant_results(m.10, thresh.seq[t]) <= 1000)/1000)

contrast.9[t,] = c(sum(get_significant_results(m.9, thresh.seq[t]) > 1000)/1000,

sum(get_significant_results(m.9, thresh.seq[t]) <= 1000)/1000)

indep.10[t,] = c(sum(get_significant_results(Indep.10, thresh.seq[t]) > 1000)/1000,

sum(get_significant_results(Indep.10, thresh.seq[t]) <= 1000)/1000)

indep.9[t,] = c(sum(get_significant_results(Indep.9, thresh.seq[t]) > 1000)/1000,

sum(get_significant_results(Indep.9, thresh.seq[t]) <= 1000)/1000)

const.10[t,] = c(sum(get_significant_results(Const.10, thresh.seq[t]) > 1000)/1000,

sum(get_significant_results(Const.10, thresh.seq[t]) <= 1000)/1000)

const.9[t,] = c(sum(get_significant_results(Const.9, thresh.seq[t]) > 1000)/1000,

sum(get_significant_results(Const.9, thresh.seq[t]) <= 1000)/1000)

}
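The loop above counts an effect once, however many conditions it is significant in. The same per-effect TPR/FPR calculation can be sketched in base R. This assumes, as our reading of `get_significant_results`, that an effect is called when its smallest lfsr across conditions falls below the threshold. The lfsr values and the `effect_roc` helper are hypothetical. `is_null` plays the role of the index `<= 1000` test above.

```r
# Sketch: per-effect TPR/FPR. Assumption: an effect is a discovery when
# its minimum lfsr across conditions is below the threshold.
effect_roc <- function(lfsr, is_null, thresh) {
  min_lfsr <- apply(lfsr, 1, min)
  t(sapply(thresh, function(th) {
    sig <- min_lfsr < th
    c(TPR = sum(sig & !is_null) / sum(!is_null),
      FPR = sum(sig & is_null) / sum(is_null))
  }))
}
lfsr <- rbind(c(0.30, 0.60), c(0.20, 0.04),   # two null effects (hypothetical values)
              c(0.01, 0.50), c(0.08, 0.07))   # two non-null effects
is_null <- c(TRUE, TRUE, FALSE, FALSE)
er <- effect_roc(lfsr, is_null, thresh = c(0.05, 0.1))
print(er)
```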

| Version | Author | Date |
|---|---|---|
| 43ebe81 | zouyuxin | 2021-03-30 |

delta.10.median = t(apply(simdata.10$B, 1, function(x) x-median(x)))

deltahat.10.median = t(apply(simdata.10$Bhat, 1, function(x) x-median(x)))

data.10.median = mash_set_data(Bhat = deltahat.10.median, Shat = sqrt(0.5))

U.c = cov_canonical(data.10.median)

m.1by1 = mash_1by1(data.10.median)

strong = get_significant_results(m.1by1)

U.pca = cov_pca(data.10.median,3,subset=strong)

U.flash = cov_flash(data.10.median, subset=strong)

U.ed = cov_ed(data.10.median, c(U.pca, U.flash), subset=strong)

m.median.10 = mash(data.10.median, c(U.c,U.ed))

- Computing 2000 x 397 likelihood matrix.

- Likelihood calculations took 0.13 seconds.

- Fitting model with 397 mixture components.

- Model fitting took 6.86 seconds.

- Computing posterior matrices.

- Computation allocated took 0.04 seconds.

sum(get_significant_results(m.median.10) < 1001)

[1] 380
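The median baseline replaces each row's mean with its median. `apply(X, 1, f)` returns one column per row of `X`, hence the outer `t()`. The toy base-R sketch below uses an illustrative matrix and a helper `median_center`. It shows that a strongly deviating condition pulls the median less than it pulls the mean, and that a purely shared effect still centres to zero.

```r
# Sketch: row-wise median centring, as in the "median" analyses above.
median_center <- function(X) t(apply(X, 1, function(x) x - median(x)))

X <- rbind(c(1, 2, 3, 10),   # condition 4 deviates strongly
           c(5, 5, 5, 5))    # shared effect only
D <- median_center(X)
print(D)                     # row 1: -1.5 -0.5 0.5 7.5; row 2: all zeros
mean_dev <- X[1, ] - mean(X[1, ])
print(mean_dev)              # -3 -2 -1 6: the outlier drags the mean baseline
```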

sessionInfo()

R version 4.0.3 (2020-10-10)

Platform: x86_64-apple-darwin17.0 (64-bit)

Running under: macOS Big Sur 10.16

Matrix products: default

BLAS: /Library/Frameworks/R.framework/Versions/4.0/Resources/lib/libRblas.dylib

LAPACK: /Library/Frameworks/R.framework/Versions/4.0/Resources/lib/libRlapack.dylib

locale:

[1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] mashr_0.2.45 ashr_2.2-51 workflowr_1.6.2

loaded via a namespace (and not attached):

[1] softImpute_1.4 tidyselect_1.1.0 xfun_0.22 reshape2_1.4.4

[5] purrr_0.3.4 splines_4.0.3 lattice_0.20-41 colorspace_2.0-1

[9] vctrs_0.3.8 generics_0.1.0 htmltools_0.5.1.1 yaml_2.2.1

[13] utf8_1.2.1 rlang_0.4.11 mixsqp_0.3-46 later_1.1.0.1

[17] pillar_1.6.0 glue_1.4.2 DBI_1.1.1 trust_0.1-8

[21] plyr_1.8.6 lifecycle_1.0.0 stringr_1.4.0 munsell_0.5.0

[25] gtable_0.3.0 mvtnorm_1.1-1 evaluate_0.14 knitr_1.31

[29] httpuv_1.5.5 invgamma_1.1 irlba_2.3.3 fansi_0.4.2

[33] highr_0.8 Rcpp_1.0.6 promises_1.2.0.1 scales_1.1.1

[37] rmeta_3.0 horseshoe_0.2.0 truncnorm_1.0-8 abind_1.4-5

[41] fs_1.5.0 deconvolveR_1.2-1 flashr_0.6-7 ggplot2_3.3.3

[45] digest_0.6.27 stringi_1.5.3 dplyr_1.0.5 ebnm_0.1-36

[49] grid_4.0.3 rprojroot_2.0.2 tools_4.0.3 magrittr_2.0.1

[53] tibble_3.1.1 crayon_1.4.1 whisker_0.4 pkgconfig_2.0.3

[57] ellipsis_0.3.2 Matrix_1.3-2 SQUAREM_2021.1 assertthat_0.2.1

[61] rmarkdown_2.7 R6_2.5.0 git2r_0.28.0 compiler_4.0.3